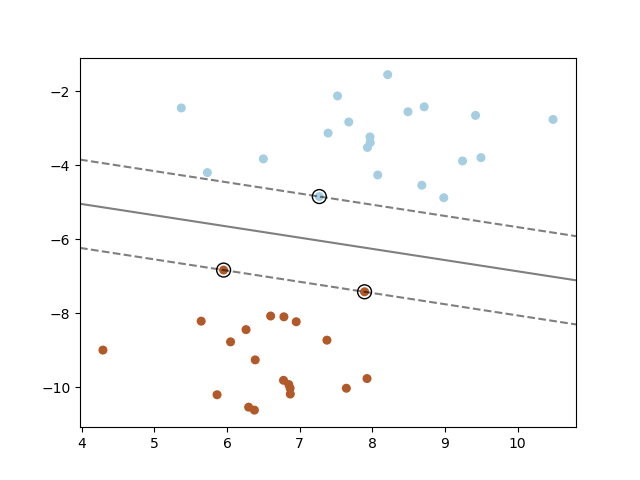

This is Part 3 of my series of tutorials about the math behind Support Vector Machines. If you did not read the previous articles, you might want to start the series at the beginning by reading this article: an overview of Support Vector Machine. The main focus of this article is to show you the reasoning that allows us to select the optimal hyperplane.

Here is a quick summary of what we will see:

- How can we find the optimal hyperplane?
- How do we calculate the distance between two hyperplanes?

At the end of Part 2 we computed the distance between a point and a hyperplane. We then computed the margin of that hyperplane. However, even though it did quite a good job at separating the data, it was not the optimal hyperplane.

Figure 1: The margin we calculated in Part 2 is shown as M1

As we saw in Part 1, the optimal hyperplane is the one which maximizes the margin of the training data. In Figure 1, we can see that the margin M1, delimited by the two blue lines, is not the biggest margin that perfectly separates the data. The biggest margin is the one shown in Figure 2 below.

Figure 2: The optimal hyperplane is slightly on the left of the one we used in Part 2.

You can also see the optimal hyperplane in Figure 2. It is slightly on the left of our initial hyperplane. How did I find it? I simply traced a line crossing the biggest margin in its middle.

Right now you should have the feeling that hyperplanes and margins are closely related. If I have a hyperplane, I can compute its margin with respect to some data point. If I have a margin delimited by two hyperplanes (the dark blue lines in Figure 2), I can find a third hyperplane passing right in the middle of the margin. Finding the biggest margin is therefore the same thing as finding the optimal hyperplane.

The recipe is: take the dataset, select two hyperplanes which separate the data with no points between them, then maximize their distance. The region bounded by the two hyperplanes will then be the biggest possible margin.

If it is so simple, why does everybody have so much pain understanding SVM? It is because, as always, the simplicity requires some abstraction and mathematical terminology to be well understood. So we will now go through this recipe step by step.

Step 1: You have a dataset $\mathcal{D}$ and you want to classify it

Most of the time your data will be composed of $n$ vectors $\mathbf{x}_i$. Each $\mathbf{x}_i$ will also be associated with a value $y_i$ indicating whether the element belongs to the class (+1) or not (-1). Note that $y_i$ can only have two possible values: -1 or +1. Moreover, most of the time, for instance when you do text classification, your vector $\mathbf{x}_i$ ends up having a lot of dimensions. We say that $\mathbf{x}_i$ is a $p$-dimensional vector if it has $p$ dimensions. So your dataset $\mathcal{D}$ is the set of $n$ couples of elements $(\mathbf{x}_i, y_i)$. The more formal definition of an initial dataset in set theory is:

$$\mathcal{D} = \left\{ (\mathbf{x}_i, y_i) \mid \mathbf{x}_i \in \mathbb{R}^p,\ y_i \in \{-1, 1\} \right\}_{i=1}^{n}$$

Step 2: You need to select two hyperplanes separating the data with no points between them

Finding two hyperplanes separating some data is easy when you have a pencil and a paper. But with some $p$-dimensional data it becomes more difficult because you can't draw it. Moreover, even if your data is only 2-dimensional, it might not be possible to find a separating hyperplane! You can only do that if your data is linearly separable.

Figure 3: Data on the left can be separated by a hyperplane, while data on the right can't

So let's assume that our dataset $\mathcal{D}$ IS linearly separable. We now want to find two hyperplanes with no points between them, but we don't have a way to visualize them. What do we know about hyperplanes that could help us?

Taking another look at the hyperplane equation

We saw previously that the equation of a hyperplane can be written $\mathbf{w}^T\mathbf{x} = 0$. However, the Wikipedia article about Support Vector Machine says:

> Any hyperplane can be written as the set of points $\mathbf{x}$ satisfying $\mathbf{w} \cdot \mathbf{x} + b = 0$.

First, we recognize another notation for the dot product: the article uses $\mathbf{w} \cdot \mathbf{x}$ instead of $\mathbf{w}^T\mathbf{x}$. You might wonder: where does the $+b$ come from? Is our previous definition incorrect? It is not; the difference is only one of notation. In our definition the vectors $\mathbf{w}$ and $\mathbf{x}$ have three dimensions, while in the Wikipedia definition they have two dimensions.

Given $\mathbf{w} = (b, -a, 1)$ and $\mathbf{x} = (1, x, y)$:

$$\mathbf{w}^T\mathbf{x} = b \times 1 + (-a) \times x + 1 \times y = y - ax + b \qquad (1)$$

Given $\mathbf{w}' = (-a, 1)$ and $\mathbf{x}' = (x, y)$:

$$\mathbf{w}' \cdot \mathbf{x}' = (-a) \times x + 1 \times y = y - ax \qquad (2)$$

Now if we add $b$ to both sides of equation (2), we get:

$$\mathbf{w}' \cdot \mathbf{x}' + b = y - ax + b = \mathbf{w}^T\mathbf{x} \qquad (3)$$

For the rest of this article we will use 2-dimensional vectors (as in equation (2)).

Given a hyperplane $\mathcal{H}_0$ separating the dataset and satisfying:

$$\mathbf{w} \cdot \mathbf{x} + b = 0$$

we can select two other hyperplanes $\mathcal{H}_1$ and $\mathcal{H}_2$ which also separate the data and have the following equations:

$$\mathbf{w} \cdot \mathbf{x} + b = \delta \qquad \text{and} \qquad \mathbf{w} \cdot \mathbf{x} + b = -\delta$$

However, here the variable $\delta$ is not necessary: dividing both $\mathbf{w}$ and $b$ by $\delta$ (with $\delta > 0$) describes the same two hyperplanes with a right-hand side of $\pm 1$. So we can set $\delta = 1$ to simplify the problem:

$$\mathbf{w} \cdot \mathbf{x} + b = 1 \qquad \text{and} \qquad \mathbf{w} \cdot \mathbf{x} + b = -1$$

Now we want to be sure that they have no points between them. We won't select just any hyperplanes; we will only select those that meet the two following constraints. For each vector $\mathbf{x}_i$, either:

$$\mathbf{w} \cdot \mathbf{x}_i + b \geq 1 \quad \text{for } \mathbf{x}_i \text{ having the class } 1$$

or

$$\mathbf{w} \cdot \mathbf{x}_i + b \leq -1 \quad \text{for } \mathbf{x}_i \text{ having the class } -1$$

On the following figures, all red points have the class 1 and all blue points have the class -1. So let's look at Figure 4 below and consider a red point in it. It is red, so it has the class 1, and we need to verify that it does not violate the constraint $\mathbf{w} \cdot \mathbf{x}_i + b \geq 1$.
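The constraints on $\mathcal{H}_1$ and $\mathcal{H}_2$, namely $\mathbf{w} \cdot \mathbf{x}_i + b \geq 1$ for points of class 1 and $\mathbf{w} \cdot \mathbf{x}_i + b \leq -1$ for points of class -1, can be checked mechanically. The Python sketch below is only an illustration: the toy points, the values of $\mathbf{w}$, $b$ and $\delta$, and the helper names `rescale` and `satisfies_constraints` are all hypothetical, chosen so that the red points (class 1) sit on one side and the blue points (class -1) on the other.

```python
from typing import List, Sequence, Tuple

# A labeled example (x_i, y_i): a feature vector and a label in {-1, +1}.
Example = Tuple[Tuple[float, ...], int]


def dot(u: Sequence[float], v: Sequence[float]) -> float:
    """Dot product w . x of two equal-length vectors."""
    return sum(ui * vi for ui, vi in zip(u, v))


def rescale(w: Sequence[float], b: float, delta: float) -> Tuple[Tuple[float, ...], float]:
    """Map the pair w.x + b = +delta / -delta onto w.x + b = +1 / -1.

    Dividing w and b by delta > 0 describes the same two hyperplanes,
    which is why delta can be set to 1."""
    if delta <= 0:
        raise ValueError("delta must be positive")
    return tuple(wi / delta for wi in w), b / delta


def satisfies_constraints(w: Sequence[float], b: float, data: List[Example]) -> bool:
    """True if no point lies strictly between w.x + b = 1 and w.x + b = -1,
    i.e. w.x_i + b >= 1 for class +1 and w.x_i + b <= -1 for class -1."""
    for x, y in data:
        value = dot(w, x) + b
        if y == 1 and value < 1:
            return False
        if y == -1 and value > -1:
            return False
    return True


# Hypothetical toy data: two red points (class +1) and two blue points (class -1).
data: List[Example] = [((6.0, 5.0), 1), ((7.0, 8.0), 1), ((1.0, 1.0), -1), ((2.0, 3.0), -1)]

# Hypothetical hyperplanes w.x + b = +/-2 with w = (2, 2), b = -16, rescaled to delta = 1.
w, b = rescale((2.0, 2.0), -16.0, 2.0)
print(w, b)                                   # (1.0, 1.0) -8.0
print(satisfies_constraints(w, b, data))      # True
print(satisfies_constraints(w, -11.0, data))  # False: (6, 5) gives 0 < 1
```

Because the labels are exactly -1 and +1, the two cases can also be folded into the single condition $y_i(\mathbf{w} \cdot \mathbf{x}_i + b) \geq 1$; the loop keeps the two-case form to mirror the text.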
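The formal definition of the dataset in Step 1, $\mathcal{D} = \{(\mathbf{x}_i, y_i) \mid \mathbf{x}_i \in \mathbb{R}^p, y_i \in \{-1, 1\}\}_{i=1}^{n}$, translates directly into code. As a minimal sketch, assuming plain Python tuples rather than any particular ML library, the hypothetical helper `make_dataset` below builds $\mathcal{D}$ and enforces the two conditions of the definition: every $\mathbf{x}_i$ has the same dimension $p$, and every $y_i$ is -1 or +1.

```python
from typing import List, Sequence, Tuple

# A labeled example (x_i, y_i): a p-dimensional feature vector and a label in {-1, +1}.
Example = Tuple[Tuple[float, ...], int]


def make_dataset(vectors: Sequence[Sequence[float]], labels: Sequence[int]) -> List[Example]:
    """Build D = {(x_i, y_i)} and check it matches the formal definition:
    every x_i lives in R^p for the same p, and every y_i is -1 or +1."""
    if len(vectors) != len(labels):
        raise ValueError("need exactly one label per vector")
    if not vectors:
        raise ValueError("dataset must contain at least one example")
    p = len(vectors[0])  # dimension shared by all x_i
    dataset: List[Example] = []
    for x, y in zip(vectors, labels):
        if len(x) != p:
            raise ValueError(f"expected {p}-dimensional vectors, got {len(x)}")
        if y not in (-1, 1):
            raise ValueError(f"labels must be -1 or +1, got {y}")
        dataset.append((tuple(float(v) for v in x), y))
    return dataset


# Hypothetical 2-dimensional toy data (n = 4, p = 2).
D = make_dataset([[1, 1], [2, 3], [6, 5], [7, 8]], [-1, -1, 1, 1])
print(len(D), len(D[0][0]))  # 4 2
```

In practice the $\mathbf{x}_i$ would more likely be stored as the rows of a NumPy array; plain tuples simply keep the example dependency-free.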
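The claim that $\mathbf{w}^T\mathbf{x} = 0$ and the Wikipedia form $\mathbf{w} \cdot \mathbf{x} + b = 0$ differ only in notation can be verified numerically. This sketch assumes the convention of a three-dimensional $\mathbf{w} = (b, -a, 1)$ applied to the augmented point $(1, x, y)$, versus a two-dimensional $\mathbf{w}' = (-a, 1)$ applied to $(x, y)$ plus a separate bias $b$; the function names and the values of $a$ and $b$ are hypothetical.

```python
from typing import Sequence


def dot(u: Sequence[float], v: Sequence[float]) -> float:
    """Plain dot product of two equal-length vectors."""
    if len(u) != len(v):
        raise ValueError("vectors must have the same length")
    return sum(ui * vi for ui, vi in zip(u, v))


def augmented_form(a: float, b: float, x: float, y: float) -> float:
    """w^T x with the bias folded into a 3-D w = (b, -a, 1) and x = (1, x, y)."""
    w = (b, -a, 1.0)
    point = (1.0, x, y)
    return dot(w, point)  # b*1 + (-a)*x + 1*y


def bias_form(a: float, b: float, x: float, y: float) -> float:
    """w'.x' + b with a 2-D w' = (-a, 1), x' = (x, y) and a separate bias b."""
    w_prime = (-a, 1.0)
    point = (x, y)
    return dot(w_prime, point) + b  # (-a)*x + 1*y + b


# Hypothetical slope/offset values; both forms agree at every test point.
a, b = 2.0, -3.0
for x, y in [(0.0, 0.0), (1.5, 4.0), (-2.0, 7.25)]:
    assert abs(augmented_form(a, b, x, y) - bias_form(a, b, x, y)) < 1e-12

print(augmented_form(a, b, 1.5, 4.0), bias_form(a, b, 1.5, 4.0))  # -2.0 -2.0
```

Folding the bias into $\mathbf{w}$ by prepending a constant 1 to every point is a common trick; keeping $b$ separate, as in the Wikipedia form, is the convention used for the rest of the article.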
